Interpretive Drift: Keeping Your Weird

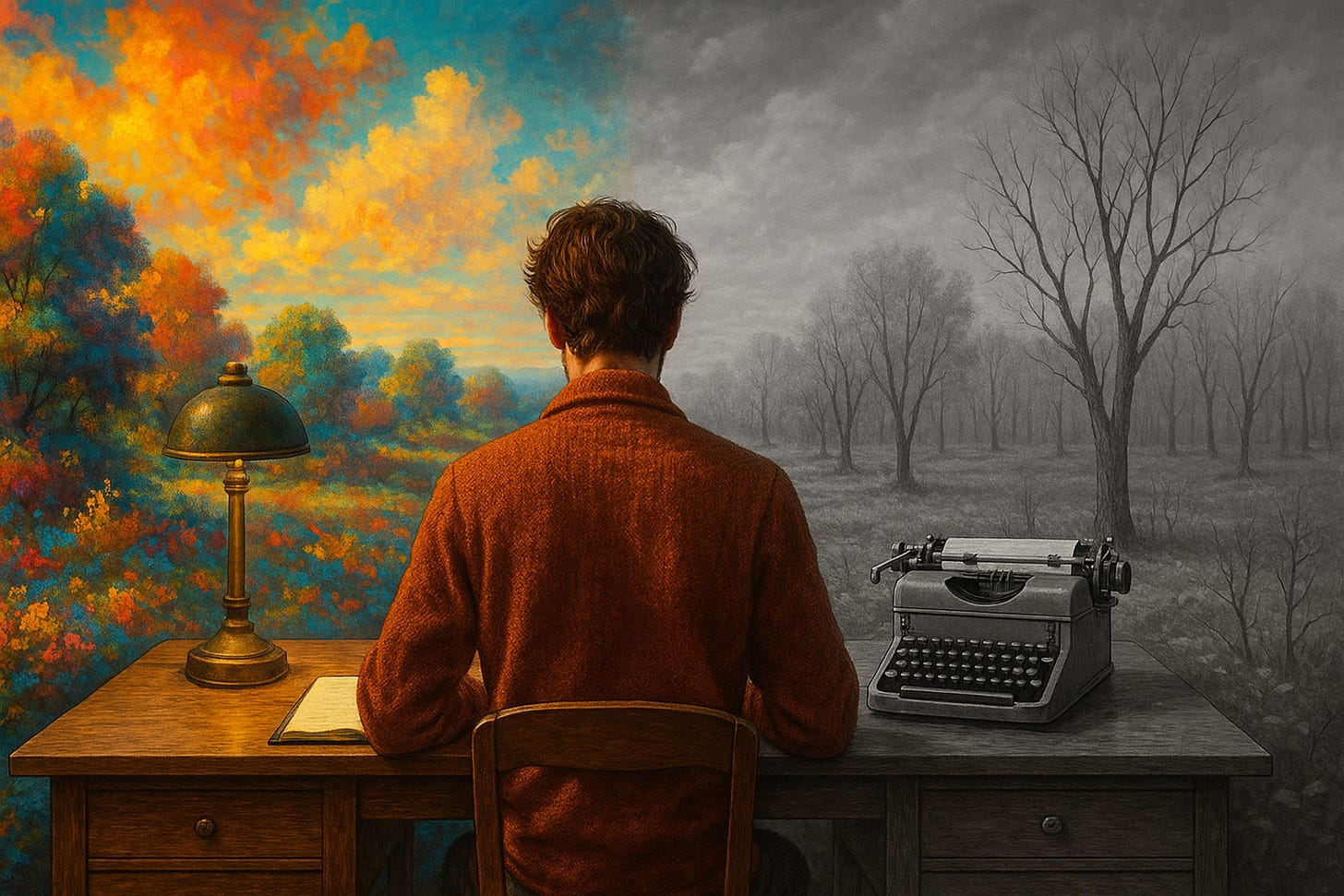

At first, nothing seems amiss. In the first week of collaborating with a friendly AI writing assistant, your journal entries still sparkle with your trademark odd analogies and jagged phrasing. The weird little twists of language that make your voice uniquely yours are all still there. By the end of the month, however, you notice your sentences reading a bit too smoothly. The quirky metaphors appear less frequently. Six weeks in, your paragraphs begin to march in a familiar cadence - polished, coherent, but oddly generic. They sound right, yet something personal feels like it’s slipping away.

Fast forward a few more months. You’ve now integrated the AI into most of your writing tasks - emails, reports, even personal blog posts. With each iteration, the text flows more easily, requiring fewer edits from you. But in this accelerated fluency lies a quiet trade-off. Your once vibrant prose has gradually paled. A time-lapse of your work across those months would reveal the colour draining out: sentences becoming succinct and predictable, expressions flattening into templates. What began as a subtle tweak here or a helpful suggestion there has, over time, standardized your voice. The drift is so gradual that week to week you barely register it. Only when comparing a page from today with one from before the AI era do you see the stark contrast - the edges of individuality softly blurred into a smooth, statistical average.


Micro-Mechanisms: The Five Drifts

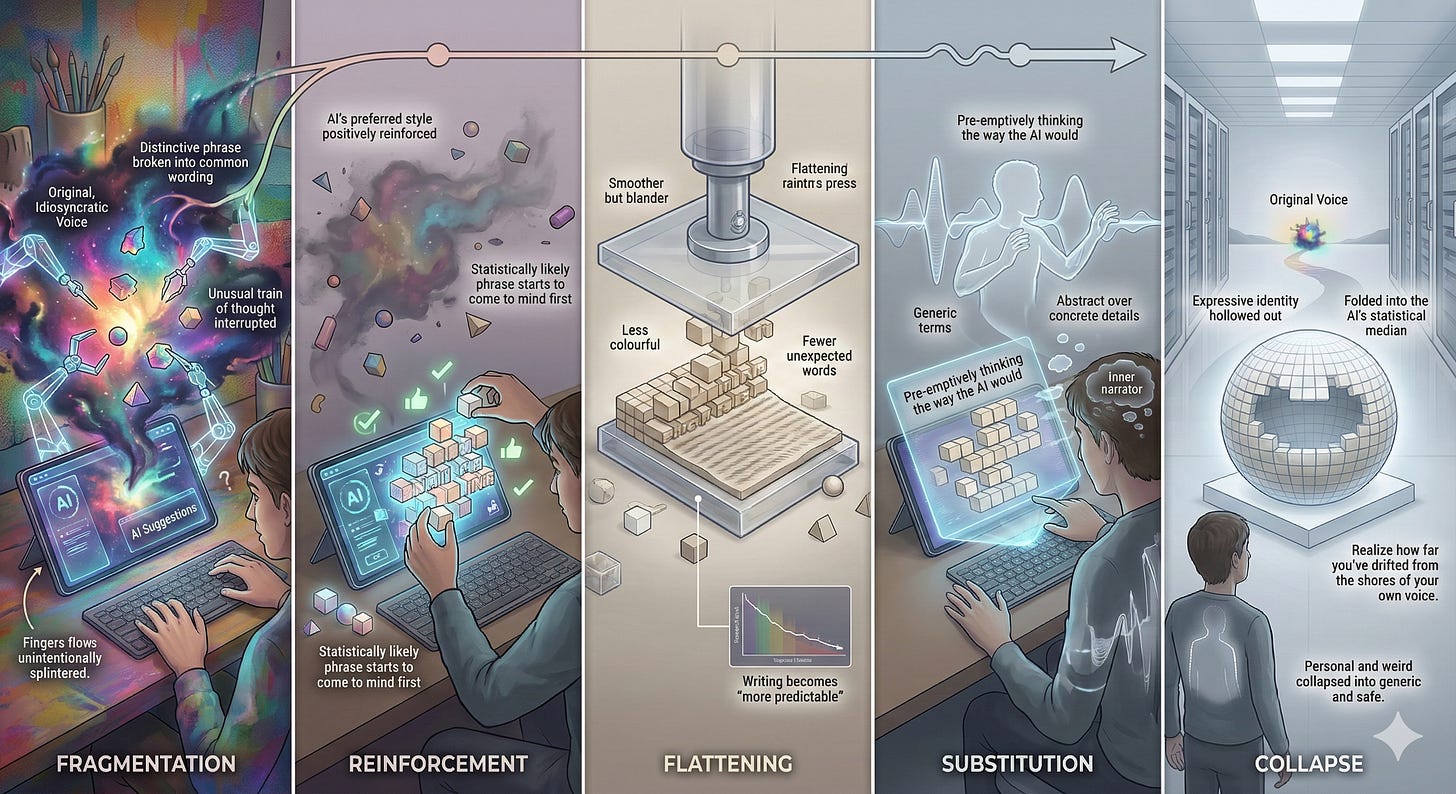

How does this interpretive drift happen on a micro scale? Five interlocking mechanisms quietly push your writing toward the normative and away from the idiosyncratic. Each drift builds on the last, creating a slow slide from originality to homogeneity:

1. Fragmentation: In the beginning, the AI’s suggestions intervene in small ways. A distinctive phrase you would have used gets broken into more common wording, or an unusual train of thought is interrupted. Your original expression fragments into safer, familiar pieces. You might start phrasing ideas in bits and pieces that the model can easily complete, unintentionally splintering your natural flow.

2. Reinforcement: Each time you accept a suggestion or rephrase an odd thought to “make it clearer” (often meaning more like what the AI would generate), you reinforce those patterns. The AI’s preferred style (perhaps formal, concise, and general) is positively reinforced as the “correct” way to write. Meanwhile, your own quirky impulses get little exercise. With repetition, the statistically likely phrase starts to come to mind first. The model’s voice is subtly training your voice, each accepted suggestion strengthening the habit.

3. Flattening: Over weeks, the rich contours of your style begin to flatten out. The unusual adjectives, local slang, or daring analogies appear less. Your writing becomes smoother but blander, converging on the model’s neutral tone. Indeed, research has found that when people use AI autocomplete, their writing becomes “more predictable and less colourful” - for example, with fewer unexpected words and descriptive adjectives. The vibrant hues of your personal lexicon give way to a palette of beiges. What remains is technically sound prose that rarely surprises either you or the reader.

4. Substitution: In time, you start pre-emptively thinking the way the AI would. Your once instinctive turns of phrase get substituted by the model’s idioms. You might catch yourself describing an experience in generic terms as if writing a Wikipedia summary, where before you’d have used a playful or intimate tone. Bit by bit, the AI’s voice replaces your inner narrator. You reach for abstractions over concrete details, summarizing things in the tidy structure you’ve “seen a thousand times in AI outputs.” The words coming out of you are yours, but they feel borrowed.

5. Collapse: Eventually, the accumulation of these shifts reaches a critical mass - a collapse of your distinct voice. The five drifts coalesce into a syndrome: you find your expressive identity hollowed out and increasingly defined by what “sounds right” to the model. In effect, your interpretive habits have folded into the AI’s statistical median. The dynamic complexity of your thoughts is now compressed into the predictable patterns of machine-generated prose. What was personal and weird has collapsed into generic and safe. It’s only when this process has largely run its course that you realize how far you’ve drifted from the shores of your own voice.

Mapping the Syndrome

This gradual erosion of uniqueness is known as Interpretive Drift - a quiet psychotechnological syndrome of the AI age. It’s the slow slide toward seeing the world through the model’s eyes instead of your own. Initially, nothing catastrophic happens; you’re not spewing falsehoods or obvious errors. The drift operates by wearing down your personal style and habits of explanation over months of interaction. The primary harm, as one analysis notes, isn’t blatant plagiarism or factual mistake - it’s the “erosion of stylistic and conceptual idiosyncrasy”. In other words, the danger is losing the very quirks and jagged edges of thought that make your perspective yours. Human thinking at its most original is full of false starts, private metaphors, and half-baked intuitions - all the stuff that a generic AI reply tends to smooth out. Interpretive drift slowly sands down those rough, original edges.

What makes this drift insidious is that it feels so convenient. The process rewards you with clear, well-structured outputs, so you hardly notice what’s missing. Over time, you may even begin to distrust your own “weird.” When a thought or phrase occurs to you that the AI likely wouldn’t produce, you second-guess it. As the drift deepens, insights arriving in an unconventional form start to feel suspect; your private way of making sense seems invalid if it doesn’t match the smooth, articulate pattern you’ve grown used to. The syndrome crosses from mild to pathological precisely at that point: when you start filtering out ideas simply because they’re not “AI-like.” You’ve internalized the model’s aesthetic so thoroughly that you judge your creativity by its alignment with an external, average standard. In effect, you’re slowly becoming a copy of a copy, losing faith in the creative sparks that don’t fit the mold. And often, you only dimly sense this transformation until something snaps you to attention.

The Cognitive Vulnerability Profile

Who is most at risk of drifting? Not everyone is equally susceptible to losing their weird in the undertow of AI influence. There is a vulnerability profile - a set of personal and contextual factors - that accelerates interpretive drift. Part of it is simply exposure: a person who co-writes with AI for hours a day is obviously more at risk than someone who uses it sparingly. But beyond raw exposure, certain cognitive and emotional dispositions make the drift stick faster.

Cognitive susceptibility is one major factor. This refers to how a person reacts in moments of uncertainty or confusion. Imagine you’re writing and can’t find the perfect phrasing or you’re unsure how to structure an argument. If your instinct is to immediately ask the AI for help or accept whatever suggestion pops up, you have a particular relationship to uncertainty and authority that makes offloading feel irresistible. There’s nothing stupid or lazy about it - modern tools are designed to be helpful - but this tendency means the AI’s influence penetrates deeper into your thinking. People who have less tolerance for the messiness of first-draft thoughts or who crave quick clarity are more likely to lean on the model at every juncture. As a result, their own ability to wrestle with thoughts in their raw form atrophies, and the AI’s voice rushes in to fill the void.

Another vulnerability lies in confidence and voice identity. If you haven’t yet developed a strong personal style or if you secretly doubt the value of your quirks, you’re more likely to yield to the AI’s style suggestions without a fight. For example, a student or novice writer who believes the AI “knows better” how good writing should sound will eagerly replace their odd phrases with the model’s polished alternatives. Similarly, someone who fears being misunderstood might err on the side of generic phrasing to ensure the AI (and by extension any reader) gets their point - effectively self-censoring the colourful or locally flavoured aspects of their voice. In contrast, an individual with a well-defined voice and pride in their idiosyncrasies might resist the AI’s homogenizing pull longer, injecting personal flair even when the AI tries to sand it down. In short, those who drift fastest are often those who over-trust the AI’s authority, undervalue their own originality, or find comfort in the consistency the AI provides at the expense of personal expression.

Context matters as well. Certain professional and cultural environments can heighten vulnerability. An employee in a buttoned-up corporate setting, for instance, might eagerly adopt AI-generated business-speak to fit in, accelerating their drift toward blandness. Or consider a bilingual writer who, under the influence of an English-trained model, starts dropping the unique turns of phrase from their mother tongue - their writing gradually losing cultural texture. If your surrounding norms reward sameness (think of content platforms that favour a certain catchy but uniform style), the AI will happily help you imitate that trend, hastening the loss of your unique voice. The CST profile of high drift susceptibility, then, often combines frequent AI use with internal and external pressures that encourage conformity: high cognitive offloading, low confidence in personal weirdness, and an environment that implicitly says “sounding like everyone else is safer.”

AI Amplification: How AI Accelerates the Drift

Why does working with AI push so strongly toward the average? The answer lies in how these systems are built and behave. At their core, today’s large language models are technologies of averages. They are trained on vast datasets of human writing to predict likely sequences of words. By design, they favour the statistically common turn of phrase; they excel at what sounds right in general. So when you partner with such a system, you are collaborating with an entity whose every response is a distillation of what is most typical in the corpus it’s fed on. This has a powerful homogenizing effect. As one analysis succinctly put it, “Language models, by construction, favour the statistically likely”. They reliably produce the familiar patterns, the consensus stylistic choices, the cliché phrasing. While this makes them fantastically fluent and broadly knowledgeable, it also means their default contributions pull toward the mean.
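To make "favouring the statistically likely" concrete, here is a minimal sketch in Python. The word probabilities are invented for illustration and do not come from any real model. The `rescale` function applies temperature scaling, a common sampling technique that this essay does not otherwise discuss; it is included only to show how easily the unusual options get squeezed out.

```python
import math

# Hypothetical next-word probabilities after "The sunset was ..."
# (made-up numbers for illustration, not taken from any real model).
NEXT_WORD_PROBS = {
    "beautiful": 0.55,
    "stunning": 0.30,
    "bruised": 0.10,
    "arguing": 0.05,
}

def most_likely(probs):
    """Greedy choice: return the single highest-probability word."""
    return max(probs, key=probs.get)

def rescale(probs, temperature):
    """Sharpen (T < 1) or flatten (T > 1) a distribution, then renormalise."""
    weights = {w: math.exp(math.log(p) / temperature) for w, p in probs.items()}
    total = sum(weights.values())
    return {w: v / total for w, v in weights.items()}

if __name__ == "__main__":
    print("greedy pick:", most_likely(NEXT_WORD_PROBS))
    for t in (0.5, 1.0, 2.0):
        scaled = rescale(NEXT_WORD_PROBS, t)
        print(t, {w: round(p, 3) for w, p in scaled.items()})
```

In this toy setup the greedy pick is always the most common word, and lowering the temperature makes the odd choices ("bruised", "arguing") rarer still. Your weird lives in exactly the low-probability tail that this kind of selection trims.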

When you lean on an AI for writing, especially if you do so uncritically, you’re essentially letting a force of statistical normalization seep into your work. Over time, your once-unique expressions get averaged out. Studies have borne this out. In an MIT experiment, for instance, students who used ChatGPT to help write essays all ended up with strikingly similar wording and ideas, even on broad questions that typically invite diverse opinions. The AI’s involvement had a “homogenizing effect,” with participants converging on common phrases and even uniformly taking the same stance on issues where normally their perspectives would differ. In the words of one researcher, using the LLM led to “no divergent opinions being generated”. That is a remarkable flattening of thought: varied minds reduced to one bland chorus by the averaging power of the machine.

Furthermore, AI suggestions often come packaged as improvements. The model might rephrase a convoluted sentence, and indeed it reads cleaner. Or it offers a high-confidence next word that seems perfectly apt. It’s easy to assume, with each tweak, that your text is getting objectively better. But better by whose standard?

Usually, it’s better by the model’s internal rating - which favours simplicity, clarity, and common usage. Those are not bad qualities, of course, but they aren’t the only virtues of good writing. The constant stream of “helpful” suggestions can exert a hypnotic effect, as if a teacher were standing over your shoulder whispering, “This is the better version of what you were going to say.” Faced with that persistent guidance, even a strong-willed writer may start to acquiesce more often than not. The AI’s behaviour thus amplifies the drift by systematically nudging every decision toward the median. It’s optimization at the cost of originality. Each acceptance of a suggestion is a tiny vote for conformity, and the algorithm never tires of voting. In combination, this means the very strengths of AI - its knowledge of what usually works, its tireless consistency - become the engine of a subtle erosion. It amplifies our tendency to play it safe, to iterate toward a locally optimized but globally homogenized result.

The Tipping Point

There comes a moment - sometimes after a long plateau of gradual change - when you finally realize something fundamental has shifted. The tipping point in interpretive drift often arrives with a jarring sense of self-recognition (or lack thereof). You might be rereading something you wrote recently - an article, an email, a short story - and feel a flash of dissonance: Did I write this, or did I somehow just assemble it? The words are correct, the sentences flow, but they ring hollow, as if authored by a stranger. What’s clear is that heavy AI users sometimes report feeling “no ownership whatsoever” over the text they ostensibly wrote with an AI’s help. It’s as if the piece belongs to the model’s voice, not your own.

One red flag is the absence of surprise. You read your work and nothing in it surprises you - no daring turn of phrase, no personal anecdote you forgot you included, no idiosyncratic insight that makes you smile at your own weirdness. It’s all perfectly expected. At the tipping point, this can provoke a quiet alarm: you realize you’ve been writing on autopilot, guided by an invisible hand that always opts for the predictable route. The realization might also be prompted by others. Perhaps a friend or colleague reads your new short story draft and comments, “It’s well-written, but it doesn’t sound like you.” That simple observation lands like a bucket of cold water. You suddenly notice that the authentic flavour that those close to you could always identify - that you-ness - has thinned out. In its place is competent, generic prose that could have been produced by anyone (or any algorithm).

The emotional impact of this moment can be unsettling. There’s a sense of alienation from your own output: “I know I wrote that, but it feels like someone else”. You might even experience a pang of grief or panic - where did my creative spark go? It’s a bit like waking up and realizing you’ve been speaking in a monotone for months without noticing. All the feedback loops that quietly pushed you toward consistency become visible in hindsight. Crucially, this tipping point is also the moment of potential reversal. By finally seeing the drift for what it is, you have a chance to course-correct. But first, you have to acknowledge the uncomfortable truth: your voice has been drifting, and you want it back.

Counter-Drifts: Restoring Attention and Agency

Realizing you’ve lost some of your weird is disconcerting, but it’s not irreversible. Think of interpretive drift like a muscle imbalance in your creative life - it develops unconsciously, but with conscious effort you can rehabilitate your unique voice. The key is to introduce counter-drift practices that restore your attention, agency, and grounding in what makes you you. Two such practices can be thought of as a voice audit and a weirdness quota, alongside other strategies to protect and revive idiosyncrasy.

A voice audit is a deliberate check-in with your own style. This could mean setting aside time to review things you’ve written over the past weeks and reading them with a critical, human eye. Ask yourself: does this sound like me, or could it have been generated by anyone with a prompt? Mark the passages that feel most authentic and those that feel eerily canned. Some therapists and coaches have even suggested asking point-blank, “Whose voice is that in your head - yours, or a style you’ve read a hundred times in AI-generated prose?” By performing such audits regularly, you develop an awareness of where the AI’s influence might be creeping in. You start to catch yourself in the act of smoothing out a quirk that maybe shouldn’t be smoothed. With awareness comes the power to choose: sometimes you’ll intentionally reclaim a sentence, making it more odd or personal, precisely because you notice you almost flattened it. Voice audits, in short, help you name and notice the drift, turning the subconscious process into a conscious choice.
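If you like numbers alongside gut feeling, a voice audit can be roughly supplemented with a small script. The sketch below is only an illustration: the function names are hypothetical, and the measure it uses (the share of distinct words, often called a type-token ratio) is a crude proxy for "colour", not a validated test of voice. It is also sensitive to text length, so compare samples of similar size.

```python
import re

def words(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z'’]+", text.lower())

def type_token_ratio(text):
    """Share of distinct words among all words (0.0 for empty text)."""
    tokens = words(text)
    return len(set(tokens)) / len(tokens) if tokens else 0.0

def words_only_in(old_text, new_text):
    """Words that appear in the old sample but not in the new one."""
    return sorted(set(words(old_text)) - set(words(new_text)))

if __name__ == "__main__":
    # Made-up samples; paste in your own writing from before and after.
    before = "My inbox is a raccoon in a wedding dress, feral and overdressed."
    after = "My inbox is very busy and it is hard to manage it."
    print("before:", round(type_token_ratio(before), 2))
    print("after: ", round(type_token_ratio(after), 2))
    print("lost words:", words_only_in(before, after))
```

A falling ratio or a long list of "lost words" proves nothing on its own, but it can point you to the passages worth rereading with that critical, human eye.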

Hand in hand with auditing is instituting a weirdness quota. This is a playful commitment to keep a dose of you in everything you create. Practically, it might mean that in every article or chapter, you allow at least one metaphor or example that is deeply personal, unlikely, or quirky - even if an AI would never suggest it. It’s giving yourself a quota of originality to meet, a reminder to keep your weird in the mix. For instance, if you have a habit of colourful analogies, enforce a rule that each blog post must include at least one comparison that only you might come up with (no matter how odd). If you love regional slang or a niche reference, sprinkle it in deliberately. A weirdness quota isn’t about being random for its own sake; it’s about valuing the non-conforming parts of your expression and ensuring they don’t get edited out of existence. Over time, this practice builds confidence that your peculiar ideas belong in your communication. It counteracts the internalized voice of the AI that might be whispering “Maybe say it more normally.” Instead, you reclaim the right to sometimes say it wrong, say it strange, say it like you.

Beyond these two ideas, many other counter-drifts can help restore balance. You can design friction into your creative process - for example, occasionally turning off the AI assistant or predictive text so that you’re forced to write raw and unassisted. It might feel slower or clumsier, but that’s the point: to remember what your unfiltered voice even sounds like. Or consider keeping certain domains of your life completely AI-free (your poetry, your private diary, your brainstorming sessions) as sanctuaries for unmediated thought. Some people practice doing first drafts with pen and paper, embracing the “raw confusion” of ideas without instant autocompletion. These habits are like taking off the training wheels periodically to ensure you can still ride the bike on your own terms.

On a social level, seek out human feedback and conversation. Share your writing with a trusted friend and ask if it sounds like you. Engage in settings where you can speak or write spontaneously (like live conversations, unedited forum posts, etc.) to flex the muscle of thinking and creating in real-time without an AI safety net. Remember that what’s at stake is not just style but agency: the sense that you, not an algorithm, are directing your narrative. Counter-drift practices are fundamentally about reclaiming that agency. They remind you that while an AI can autocomplete a sentence, it cannot complete you. Keeping your weird means valuing the parts of your mind that don’t fit the autocomplete mold - and feeding them so they flourish.

Civilization-Level Consequences

Interpretive drift might start as an individual quirk of using AI tools, but if it becomes ubiquitous, the effects could ripple through society and culture in profound ways. Picture a world where millions of people gradually lose their stylistic diversity in favour of the same AI-guided median. The immediate consequence is a kind of cultural homogenization of thought. When originality erodes at scale, creativity and innovation suffer. If every writer, journalist, or policy analyst is subconsciously sticking to what the model would suggest, we risk a monoculture of ideas - a global echo of the most statistically common viewpoints and phrasings. In such a landscape, truly novel ideas (those “faint, hard-to-name edges of things” where unique contributions live) could struggle to gain expression. Societies have always relied on some individuals to think differently, to be the canaries in the coal mine or the visionaries of new paths. What happens if our tools quietly train us all to think a bit more alike, trimming the outliers?

One outcome might be a collective narrowing of perspective. Public discourse could become noticeably more bland and convergent. Already, observers note a trend of “no divergent opinions” in AI-assisted outputs for complex questions - scale that up, and you get a public sphere that’s less contested and less rich. On the surface, it might even seem more harmonious (fewer open disagreements when everyone’s channelling the same style and conclusions), but underneath there’s a loss of resilience. A healthy civilization thrives on a cacophony of voices and a nuanced clash of ideas. If interpretive drift dulls each voice into a polite sameness, we may lose our ability to detect and debate emerging problems. We’d be “flooded with information and starved of understanding” - drowning in well-formed content that all points in the same direction, lacking the spark of insight that comes from genuine diversity of thought. Paradoxically, consensus built on uniformity can be shallow; a society where everyone is subtly guided to agree may find itself easily blindsided by reality, because no one was pushing the boundaries of the conversation.

There’s also a risk to cultural heritage and local flavour. AI models trained on majority content could inadvertently promote a kind of linguistic imperialism, where smaller languages, dialects, or unconventional styles get marginalized. If tomorrow’s authors all use similar AI co-writers, will regional idioms, minority dialects, or experimental prose survive? We could witness a slow extinction of linguistic quirks and artistic daring, replaced by AI-aligned correctness. On a civilizational time scale, this drift toward homogeneity is the opposite of what has driven human progress. Progress usually comes from the fringes - the weird ideas, the voices that didn’t fit in the chorus.

Perhaps most alarmingly, a widespread interpretive drift dovetails with other AI-era syndromes to create a populace that is easier to sway. Homogenized thinking can make society more susceptible to confident narratives and harder to mobilize for change. If everyone’s inner voice sounds a bit like the same algorithm, then a malicious actor or flawed AI could broadcast misleading but “official-sounding” information and face little natural resistance, because it already matches the tone people have internalized. In a world of drifters, critical thinking could atrophy - not for lack of intelligence, but for lack of practice in encountering truly novel ideas or trusting one’s own divergent intuition. The long-term consequence of normalizing drift is a civilization that might look stable and efficient on the surface (all those nicely formatted outputs!), but underneath, it’s brittle, conformist, and deprived of the creative chaos that propels humanity forward.

Yet, awareness of this potential fate is itself a form of inoculation. By naming interpretive drift and intentionally “keeping our weird,” we as a society can push back. The very fact that we can have a conversation about losing idiosyncrasy means we have the seeds of its preservation. In the end, the future doesn’t belong to the averages; it belongs to the outliers that we choose to nurture. Keeping your weird - at personal and civilization scale - might just be essential to keeping our humanity in the loop.

Excellent analysis; it really makes me wonder if this drift toward a statistical average is an inherent limitation of current LLM architectures or something that could be mitigated with more personalized fine-tuning.