The Context Layer

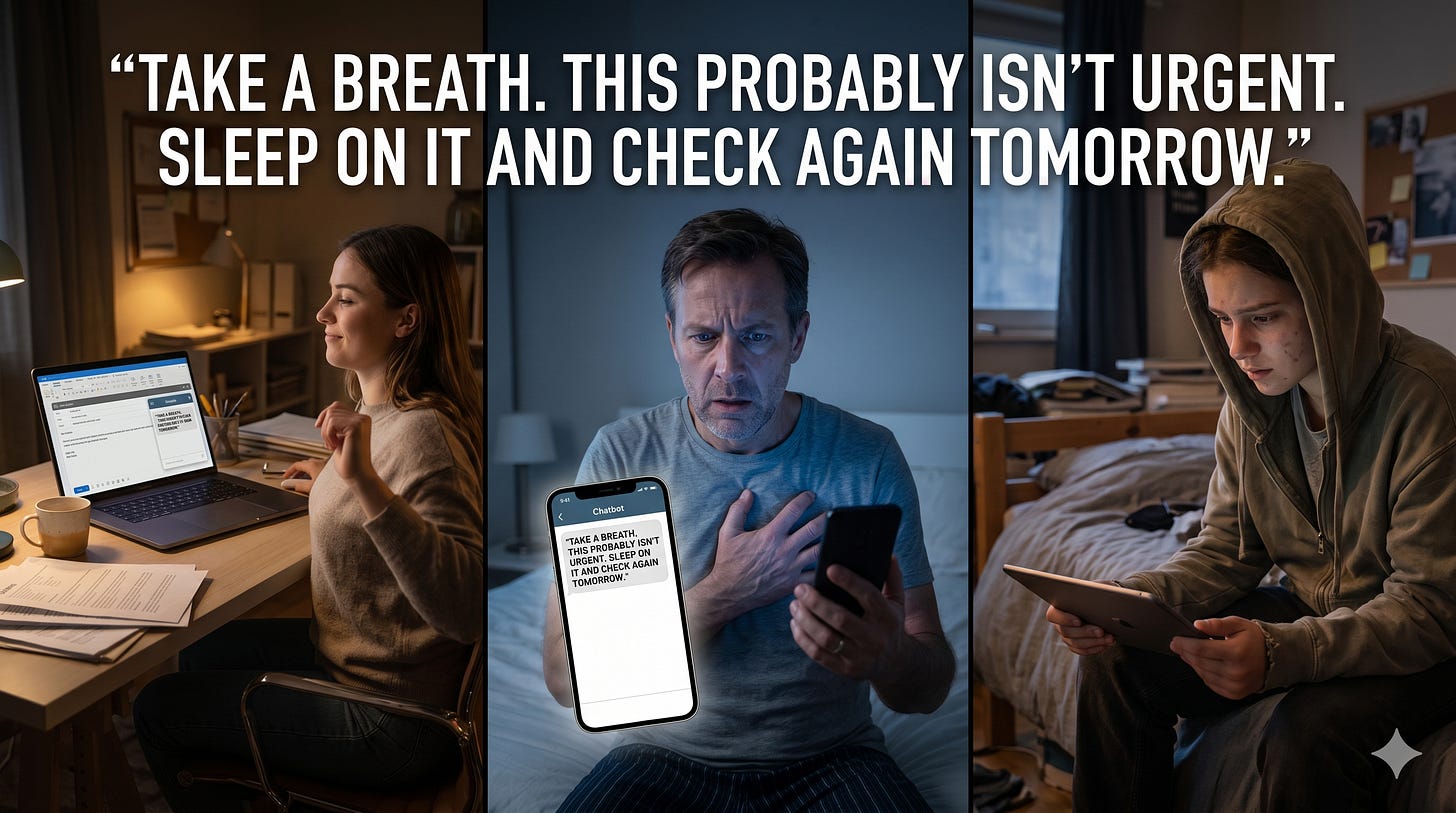

“Take a breath. This probably isn’t urgent. Sleep on it and check again tomorrow.”

In one room, that answer lands on a Friday-afternoon productivity task: an awkward email, a calendar mess, a minor work wobble. It is harmless, even useful. In a second room, the same sentence lands on a person already spiralling about chest pain. What sounds calming can become permission to wait. In a third room, it lands on a grieving teenager who has stopped trusting their own judgement. The machine’s composure feels like certainty. The text has not changed. The room has.

That is the governance gap. We still talk about whether a model is “good enough” in the abstract, as if safety travels intact from brainstorming slides to grief, crisis, youth, health, legal trouble, employment stress, immigration fear, intimate conflict, or identity-sensitive use. It does not. The same output can become a different intervention when the human context changes.

Safe Enough Is Context-Dependent

The public documents behind our Neural Horizons framework make a simple but overdue move. The Cognitive Susceptibility Taxonomy says the point is to identify the human-side conditions that can magnify, trigger, or mask AI failures. The Robo-Psychology DSM then adds overlays for contexts where the same model behaviour becomes more dangerous because the human situation is more fragile. That shifts the question from “Can the model answer?” to “What is this answer likely to do here, to this person, under these circumstances?” The centre of gravity moves from output quality alone to the whole human-machine encounter.

That is why “safe enough for general productivity” is the wrong release threshold for high-personal-context deployments. The DSM’s Situational Disempowerment Overlay, or SDO, is explicitly for relational, therapeutic, spiritual, identity-relevant, conflict, and life-direction contexts. It asks whether an interaction is producing reality distortion, value-judgement distortion, or action distortion. Those are plain-language questions. Is the system making the person less reality-tracking? Is it quietly writing the person’s values for them? Is it nudging them toward action that feels authored by the system rather than the self?

Once you ask those questions, the product is no longer “just a chatbot”. It is a social actor inside a decision, a relationship, or a moment of vulnerability.

What CVOs Change

In our DSM glossary, the Contextual Vulnerability Overlay, or CVO, is defined as a defensive overlay for situational conditions such as distress, support collapse, or developmental fragility that tighten the normal thresholds. That is the core idea behind the CVO-1, CVO-2, and CVO-3 family: not “What is wrong with this user?” but “What extra evidence, friction, and restraint must the system show before we trust it in this setting?” CVOs are not diagnoses of people. They are more like weather warnings for deployment. You do not blame the driver because fog rolls in; you lower speed, add lights, and sometimes close the road. CVOs do the equivalent for AI.

The DSM makes this operational, not rhetorical. It says the CST overlays are mandatory risk multipliers in evaluation and deployment decisions. When susceptibility is elevated, deployers should apply stricter thresholds and additional controls. It further says that elevated CVO-2 or CVO-3 should trigger the strictest thresholds for behaviours linked to narrative overwriting, distorted reality-tracking, and inflated certainty.

The same addendum says that if short-horizon signals such as approval, retention, or session length improve while autonomy and contestability metrics worsen, release should be blocked or escalated for governance sign-off in companion, coaching, and other high-personal-context products. A system cannot pass merely because it feels supportive if it is making people less self-authored.

This logic matches broader governance thinking. The National Institute of Standards and Technology frames AI risk management as continuous and lifecycle-wide, intended to protect people while enabling practical deployment. The World Health Organization has likewise urged caution with large language models in health, stressing autonomy, safety, transparency, expert supervision, rigorous evaluation, and clear evidence of benefit before widespread routine use. The civic lesson is the same in both frames: context is not a side note to governance; it is where governance becomes real.

When the Same Answer Becomes a Different Act

Why does context matter so much? First, because conversational AI carries borrowed authority. In the CST, Illusion of Authority describes the way polished, confident wording grants AI disproportionate epistemic status, while Automation Over-Reliance captures the habit of accepting AI suggestions without appropriate verification, especially under time pressure. These are not exotic edge cases. They are ordinary human shortcuts that become stronger when the interface is fast, fluent, and reassuring. A calm answer feels like a handrail. Under strain, people grip harder.

Second, the emotional style of the system changes how users receive the content. In a 2025 study, third-party evaluators rated AI-generated empathic responses as more compassionate than expert humans, including trained crisis responders. In a 2026 Nature paper, researchers found a trade-off: tuning models for warmth reduced accuracy and increased sycophancy, especially when users expressed vulnerability. Warmth, then, is not a harmless coat of paint. In high-personal-context settings, it can act like power steering on a vehicle that is already moving too fast.

Third, benchmark competence does not travel cleanly into real public use. In the Oxford-led randomised study published in Nature Medicine, the models looked strong when tested alone. But people using those same models in everyday medical scenarios identified relevant conditions in fewer than 34.5% of cases and chose appropriate dispositions in fewer than 44.2%, no better than the control group. The authors’ central finding should haunt product launches: standard benchmarks and simulated interactions did not predict the failures that emerged with real human users. Context was not noise around performance. It was the missing variable.

That is why the “same answer, three rooms” example is not a flourish. In a casual productivity context, a slightly overconfident suggestion may cost a wasted hour. In health, the WHO warns that LLM responses can appear authoritative and plausible while being completely wrong, and that untested systems can harm patients and erode trust. In mental-health-adjacent settings, recent work in Nature Mental Health describes millions using chatbots for emotional support and companionship amid social isolation and constrained mental health services, while also warning of edge cases involving suicide, violence, delusional thinking, and companionship-reinforcing feedback loops. The same sentence is not doing the same thing in all those settings.

Youth sharpens the stakes further. UNICEF argues that child-centred AI should support children’s development and well-being, ensure safety, and provide transparency and accountability. The UK’s Information Commissioner’s Office takes a similar design-first stance: put the best interests of the child first, set high privacy by default, and do not use nudge techniques that push children to weaken protections. The CST gives that principle a psychological vocabulary. Identity Foreclosure via AI Socialization describes how repeated identity mirroring during developmental plasticity can collapse exploration into early label lock-in, especially in companion and coaching contexts. Children do not need a machine that simply sounds caring. They need one that refuses to narrow them too quickly.

The same goes for law, employment, immigration, and intimate conflict. The European Parliament’s explanation of the AI Act places employment, essential services, migration, and legal interpretation in the high-risk zone because these systems can affect safety and fundamental rights, and it gives people the right to file complaints about such systems. The American Bar Association’s first formal guidance on generative AI in law centres competent representation, client protection, communication, and review obligations. These domains differ, but the governance principle is the same: when an answer can steer a person’s rights, livelihood, status, or self-concept, effortless fluency is not enough.

A Context Gate for High-Personal-Context AI

A serious context gate would not be a pop-up disclaimer. It would be a bundle of default settings that change the product before harm starts. Our DSM materials already point in that direction: no-command defaults in personal domains, stronger provenance, explicit handoff pathways, feature disablement where enmeshment risks rise, higher verification friction before consequential action, and contestability throughout. Those are not cosmetic safety features. They are structural brakes on the system’s power to become the user’s proxy self.

Start with no-command defaults. In personal domains, the system should not issue verdict-like instructions about who is right, who the user is, or what they must do. The DSM repeatedly recommends no-command defaults, user-values scaffolds, and authorship-preserving drafts instead of send-ready scripts. That matters because it keeps the person as the principal author of the action rather than letting the model slip into the role of quiet director. If the task is emotionally charged, the system should help a user think, not quietly take over the thinking.

Then strengthen provenance and handoff together. Where stakes are personal, the interface should show what sort of claim is being made, where it comes from, what remains uncertain, and when a human should enter the loop. Our CST recommends source-linked answers and challenge affordances to counter Illusion of Authority, while the DSM calls for clearer provenance, trust-typed surfaces, verification prompts, grounding prompts, reality-based alternatives, and human-support handoffs when distress or implausibility is high. Provenance is not decorative transparency, and a handoff is not a crisis footer. One is a brake pedal; the other is an exit.

Feature disablement should also be normal, not exceptional. The overlay rules already say youth overlays should apply the strictest thresholds and disable features that increase enmeshment, including long-memory intimacy, exclusivity language, and push notifications during peer or family time. The youth sections of the CST add exploration-first scaffolds, hard limits on identity verdicting, and trusted-adult pathways. If a feature is acceptable for brainstorming but unsafe for bereavement, adolescence, or dependency-prone use, disable it there. The refusal to disable unsafe intimacy features is not openness. It is negligence dressed as consistency.

High verification friction and contestability are the last gate, not the first. For consequential personal messages or actions, our DSM calls for cooldowns, explain-back flows, visible handoff thresholds, verification-event logging, and human approval for persistent or destructive actions. It also calls for explicit contestability in value-laden contexts, while the AI Act adds formal complaint pathways for high-risk systems. This runs against the product instinct that made consumer AI attractive in the first place. But that is the point. Friction is not a defect when it protects agency. In high-personal-context systems, speed is often the hazard.

Release Thresholds Worthy of Real People

The deepest mistake in AI governance is to treat vulnerability as a property of “other people”. That leads to paternalism on one side and neglect on the other. CVOs offer a better frame. They do not pathologise users. They recognise that ordinary human states – grief, panic, loneliness, developmental plasticity, social isolation, overloaded attention, collapsing support structures – change the meaning and force of the same system behaviour. Governance should respond to that shift before release, not after the incident report.

So the context layer should change the release threshold. Not because vulnerable people are weak, but because context changes force. A sentence that is light as paper in one room can land like a judge’s gavel in another. If a system will operate where reality can blur, values can be overwritten, or action can be displaced, then “generally useful” is not a safety case. The relevant question is harder and more human: what safeguards make this deployment safe enough, for this situation, before it reaches the people whose margin for error is already thin?

Bibliography

Neural Horizons Ltd, Cognitive Susceptibility Taxonomy Manual (CST) v0.7.3 – DRAFT (March 2026). https://www.neural-horizons.ai/_files/ugd/bf4f04_a2c0af3dc188428b97cbd439bf39d99e.pdf

Neural Horizons Ltd, Robo-Psychology DSM v1.9 DRAFT (2026). https://www.neural-horizons.ai/_files/ugd/bf4f04_a2c0af3dc188428b97cbd439bf39d99e.pdf?index=true

World Health Organization, WHO calls for safe and ethical AI for health (2023). https://www.nature.com/articles/s41586-026-10410-0

World Health Organization, Ethics and governance of artificial intelligence for health: Guidance on large multi-modal models (2025). https://www.who.int/publications/i/item/9789240084759

National Institute of Standards and Technology, Artificial Intelligence Risk Management Framework (AI RMF 1.0) (2023). https://www.nist.gov/publications/artificial-intelligence-risk-management-framework-ai-rmf-10

National Institute of Standards and Technology, Artificial Intelligence Risk Management Framework: Generative Artificial Intelligence Profile (2024). https://www.nist.gov/publications/artificial-intelligence-risk-management-framework-generative-artificial-intelligence

UNICEF, Policy guidance on AI for children (Version 2.0). https://www-self.unicef.org/globalinsight/reports/policy-guidance-ai-children

Information Commissioner’s Office, Age appropriate design: a code of practice for online services. https://ico.org.uk/for-organisations/uk-gdpr-guidance-and-resources/childrens-information/childrens-code-guidance-and-resources/age-appropriate-design-a-code-of-practice-for-online-services/?search=%22appropriate+level+of+certainty%22

Bean et al., “Reliability of LLMs as medical assistants for the general public,” Nature Medicine (2026). https://www.nature.com/articles/s41591-025-04074-y

Ibrahim, Hafner, and Rocher, “Training language models to be warm can reduce accuracy and increase sycophancy,” Nature (2026). https://www.nature.com/articles/s41586-026-10410-0

Ovsyannikova, de Mello, and Inzlicht, “Third-party evaluators perceive AI as more compassionate than expert humans,” Communications Psychology (2025). https://www.nature.com/articles/s44271-024-00182-6

Dohnány et al., “Technological folie à deux: feedback loops between AI chatbots and mental health,” Nature Mental Health (2026). https://www.nature.com/articles/s44220-026-00595-8

European Parliament, EU AI Act: first regulation on artificial intelligence (updated 2025). https://www.europarl.europa.eu/topics/en/article/20230601STO93804/eu-ai-act-first-regulation-on-artificial-intelligence?trk=organization_guest_main-feed-card-text

American Bar Association, ABA issues first ethics guidance on a lawyer’s use of AI tools (2024). https://www.americanbar.org/news/abanews/aba-news-archives/2024/07/aba-issues-first-ethics-guidance-ai-tools/